As you likely know, collecting data during a systematic review and working with that data after it has been extracted are two very different things. If you don’t consider how you will use the data as you’re designing your screening and data extraction forms/instruments, you may end up collecting it in a format that is tough to work with down the road.

This post offers two simple form design tips that will help to leave you with data that is cleaner and easier to work with.

Tip #1: Use Closed-Ended Questions Whenever Possible

Often you will see free-form text fields used to capture things like study type, publication language, or country. Generally speaking, if the set of all possible answers to these questions can be defined in advance, the practice of reviewers typing in text answers is suboptimal – here’s why.

Let’s say, for example, that you are asking about the study type and that the answer is “RCT”. If you provide your reviewer with a text field in which to enter this value, you may get things like “Randomized Trial”, “RCT”, “Randomized Controlled Trial”, etc. All of these answers mean the same thing, but because they have been entered as different strings, you can’t use these values to sort/search response sets by study type, count the number of RCTs, etc. You will have a lot of manual data cleaning to do before you can use the result sets for any sort of analysis.

By contrast, if you had used a multiple choice list for study type, the value for RCTs would have been entered the same way every time and your data set would not require any cleaning – at least not for this question.
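To make the difference concrete, here is a minimal Python sketch. The reviewer answers and the `StudyType` list are made up for illustration and don't come from any particular review tool.

```python
from collections import Counter
from enum import Enum

# Hypothetical free-text answers typed by four reviewers for the same study type.
free_text_answers = ["RCT", "Randomized Trial", "Randomized Controlled Trial", "rct"]


class StudyType(Enum):
    """Hypothetical closed list of study types offered on the form."""
    RCT = "RCT"
    COHORT = "Cohort"
    CASE_CONTROL = "Case-control"


# The same four answers captured through a multiple choice list.
closed_answers = [StudyType.RCT] * 4

free_text_counts = Counter(free_text_answers)
closed_counts = Counter(closed_answers)

print(len(free_text_counts))         # 4 distinct strings for one study type
print(closed_counts[StudyType.RCT])  # 4 RCTs, counted without any cleaning
```

With the free-text version, counting RCTs first requires mapping every spelling back to a single value. With the closed list, the count is available right away.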

The comment I hear most frequently when discussing the merits of closed-ended questions is, “What if there is an answer that the study designer has not anticipated and is therefore not in the list? How do we capture that?” This problem is easily solved by adding an “Other” option and a text field to capture any outliers. Better yet, some software will allow reviewers to add additional responses to form questions as they review, thus expanding the list of options as needed while keeping the values consistent.
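As a rough sketch of the “Other” approach, the Python below accepts only values from a predefined list and requires a text explanation when “Other” is chosen. The option list and the names `StudyTypeAnswer` and `make_answer` are hypothetical, not taken from any specific software.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical list of allowed options, including the "Other" escape hatch.
ALLOWED_STUDY_TYPES = {"RCT", "Cohort", "Case-control", "Other"}


@dataclass(frozen=True)
class StudyTypeAnswer:
    choice: str
    other_text: Optional[str] = None


def make_answer(choice: str, other_text: Optional[str] = None) -> StudyTypeAnswer:
    """Build an answer, rejecting anything that is not in the closed list."""
    if choice not in ALLOWED_STUDY_TYPES:
        raise ValueError(f"{choice!r} is not one of the allowed options")
    if choice == "Other":
        # Free text is only accepted (and required) alongside "Other".
        if other_text is None or not other_text.strip():
            raise ValueError('"Other" needs a short description')
        return StudyTypeAnswer(choice, other_text.strip())
    # For listed options, any stray free text is discarded.
    return StudyTypeAnswer(choice)


print(make_answer("RCT"))
print(make_answer("Other", "  Crossover trial "))
```

The free text stays confined to the outliers, so the bulk of the answers remain consistent.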

The bottom line is, the less you ask your reviewers to type, the cleaner your data will be.

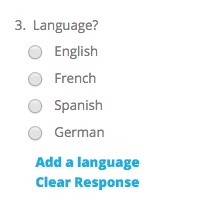

Tip #2: Use Validation on Text Fields

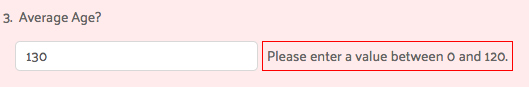

Of course, there will always be questions that cannot be closed-ended, for which reviewers must type in answers. For these cases, there are a few things you can do to help guide the reviewer to giving you the data you want, in the format you need.

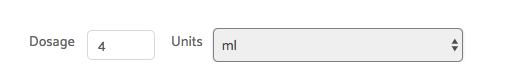

One example that frequently comes up is numeric input for things like subject counts or age. For numeric fields, you have a few options to help ensure the captured data is clean. First, you can use in-form validation to force the user to enter only numeric characters into the field. This is particularly useful in preventing the entry of units of measurement (e.g. “10ml”), which will mess up any mathematical operations that you want to perform on this field at analysis time. It’s much better to have a separate closed-ended question to capture units, allowing you to perform mathematical operations on the data without manual cleanup.
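Here is one way the numeric check might look in Python. It is a sketch only: `parse_numeric_field` and the unit list are hypothetical names, and it assumes whole numbers such as subject counts.

```python
# Hypothetical closed list for the separate "units" question.
ALLOWED_UNITS = ("ml", "mg", "years")


def parse_numeric_field(raw: str) -> int:
    """Accept only ASCII digits, so values like '10ml' are rejected at entry time."""
    text = raw.strip()
    # str.isdigit() also accepts some non-ASCII digit characters, hence isascii().
    if not (text.isascii() and text.isdigit()):
        raise ValueError(f"{raw!r} is not a whole number")
    return int(text)


def parse_unit(raw: str) -> str:
    """Accept only units from the closed list."""
    if raw not in ALLOWED_UNITS:
        raise ValueError(f"{raw!r} is not one of {ALLOWED_UNITS}")
    return raw


# The value and its unit are captured separately, so the numbers can be summed directly.
volumes = [parse_numeric_field("10"), parse_numeric_field(" 25 ")]
unit = parse_unit("ml")
print(sum(volumes), unit)
```

Because the unit lives in its own field, the numeric column never needs to be stripped of text before analysis.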

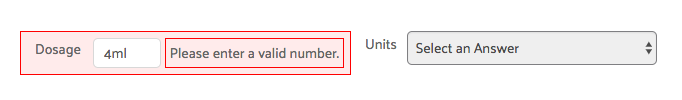

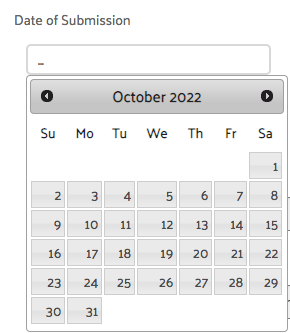

No discussion about validation would be complete without touching on dates, the data type that is perhaps the most inconsistently formatted. There are simply too many ways to record dates, and they vary from country to country, organization to organization, and even person to person. To capture dates consistently, here are two good options:

- Use separate numeric fields for day, month and year

- Use a date picker that always saves date entries in the same format (almost like a closed-ended question)

Without some enforcement of format, you are almost certain to end up with date issues, especially if you have more than a few reviewers. Using one of these techniques should help.
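To illustrate the first option, the sketch below combines separate day, month and year values into a single stored date using Python’s standard `datetime` module. Storing the result as an ISO 8601 string (YYYY-MM-DD) is my assumption here, and `build_date` is a hypothetical helper name.

```python
from datetime import date


def build_date(day: int, month: int, year: int) -> str:
    """Combine separate numeric fields into one consistently formatted date string.

    date() raises ValueError for impossible dates such as 31 February.
    """
    return date(year, month, day).isoformat()


print(build_date(3, 4, 2021))  # 2021-04-03
```

Since day and month arrive in separate fields, there is no ambiguity about whether “03/04/2021” means 3 April or 4 March.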

These are just two of many simple ways to help capture better data by crafting better questions. In a future post, I’ll share some strategies for dealing with repeating data sets (possibly the most challenging type of data to work with, both at the review and analysis phases). Until then, I hope you found these tips for using closed-ended questions and text field validation useful, and I look forward to your feedback.