In a recent webinar, DistillerSR customers Christa Goode, Director of Clinical and Medical Affairs at Johnson & Johnson, and Sara Garbin, Senior Clinical Development Scientist at Philips, were joined by Dr. Bassil Akra, founder and CEO of AKRA Team GmbH, to discuss the challenges of developing a robust surveillance program while managing unprecedented volumes of research and gray literature combined with tight submission deadlines.

Q: Dr. Akra, can you please set the stage for this conversation from a regulatory perspective?

A: The global regulatory landscape is ever evolving, and there’s a real need to reevaluate and restructure our processes to understand how we define acceptance criteria and specifications for the medical devices we plan to launch and keep on the market. The evidence is the starting point for answering all these questions, and thus literature reviews have become critical in defining key safety and performance criteria and supporting the determination of the risk-benefit profile. This applies to the EU Medical Device Regulation (MDR) and In Vitro Diagnostic Regulation (IVDR) when considering clinical evaluation report (CER) and performance evaluation report (PER) compliance, as well as post-market surveillance (PMS) compliance. The regulations were published back in 2017, so we’ve now gone through a transition period in which everyone was trying to understand them and apply them to their own portfolios.

Now, five years later, manufacturers are ready to get their certification for the European market. Capacity, however, is a big problem for notified bodies at the moment. There are more submissions than staff can handle, and waiting times to receive final compliance approvals are getting longer. This is why manufacturers need a clear storyline for building acceptance criteria that point to the evidence supporting their device’s purpose and risk-benefit profile. Furthermore, manufacturers have to clearly demonstrate the scientific validity of their criteria while keeping up with the latest evidence on both new and legacy devices. It’s critical to ensure a long-term post-market strategy for continuously monitoring the literature across the medical device portfolio.

Many manufacturers are looking to sell their devices in multiple markets, so they need to address requirements that may differ across Germany, Italy, France, the United States, China, and Japan. The new EU MDR is pushing for more frequent updates than the annual frequency previously accepted under the EU Medical Device Directive (MDD), and therefore companies have to reevaluate their processes and implement efficiencies wherever possible. Hence the topic of this webinar: how can we move forward, making use of automation to streamline the literature review management process while checking the regulatory boxes and ensuring a sustainable corporate strategy?

Q: What are the main pillars of post-market surveillance, and how do they relate to regulatory compliance?

A: Post-market surveillance is really important, especially when you’re trying to assess the clinical performance of your device. As we all know, we need to be attuned to any potential safety issues, and there’s only so much you can determine based on bench testing and clinical trials. The moment your device is used in a clinical setting, you start accumulating real data about its performance. You need to cast a wide net that goes beyond the traditional complaint reporting system to collect your evidence and try to capture incidents that might flag potential new risks to be considered.

Q: So is it fair to say that you need to learn to anticipate and identify signals of a potential risk because you don’t want to wait until damages occur? And that as an organization, you want to be perceived as committed to continuous improvement?

A: Exactly. And that’s where the continuous nature of post-market surveillance comes into play. You’re always monitoring the market and your other sources of information to get a complete picture of your device’s performance and predict any issues that may arise.

Q: Christa, how do you see this issue from your perspective? How do you look for safety signals related to post-market surveillance in the clinical evaluation process?

A: At Johnson & Johnson, we looked into post-market surveillance requirements and realized that literature reviews can support PMS performance and safety analysis. From there, we identified key outcomes that our team should focus on from a clinical standpoint and from a risk management perspective.

PMS compliance also requires that you assess misuse for every device, and we found that monitoring the literature was particularly useful in determining whether a particular instance of misuse was a one-off event or a trend that needed investigating by our team. Another area supported by the literature is risk management. We go back to the supporting documentation and ensure continuous, comprehensive coverage of potential risks. The summary of this data is your risk-benefit analysis. You can also extrapolate this data into your state-of-the-art (SOTA) comparison, extracting data from the literature to find similar devices and see how they compare to yours.

As you can see, we basically went through the core pillars of post-market surveillance and realized that literature reviews have a supporting role in all of them. It goes far beyond just randomly scanning for adverse events. You can create a robust ecosystem with your cross-functional team members to comprehensively address all the requirements by setting up regular literature searches to keep up with the latest evidence.

Now, tying all of these aspects to the regulatory dimension, we recently underwent an audit with a notified body. We had one last October and another one, coincidentally, last week. It was a two-day audit in total and our literature monitoring process was center stage for a day and a half. We spent six hours on literature reviews and how they funnel through the CER process and the post-market surveillance process. Because we systematically addressed those five pillars—safety, performance, misuse, risk, and state-of-the-art—using literature reviews, it made for a very easy audit.

Q: It’s definitely very interesting to see how literature and literature reviews are contributing to the compliance process and how notified bodies are focusing on the technical aspects of clinical requirements. What about you, Sara? Are you facing these same challenges?

A: At Philips, we’re not quite where Christa and her team are in terms of approach. We’re working towards getting there. Three years ago, we were still doing literature reviews manually. There’s a whole set of challenges deriving from these manual processes, but the main one is volume. The challenge for us right now is to implement DistillerSR widely throughout Philips. We’ve reached a point where it’s clear that conducting literature reviews manually while trying to keep up with the current volume of evidence we’re monitoring is no longer sustainable.

I agree with Christa that the focus of notified bodies has absolutely shifted. In the audits we’ve been through so far, there’s a very clear focus on literature reviews. Making sure that your searches are robust and comprehensive is critically important, especially when you’re dealing with high-risk devices. Relying on the accuracy of the results of our literature reviews is paramount for proving that we’ve done our due diligence in ensuring the safety and effectiveness of a medical device.

Q: Going back to what Christa said, then, we need to have a different kind of literature review. We need to employ automation to simplify the process and to build a systematic, active post-market surveillance strategy that continuously collects data and keeps us informed. For some devices, for example, you could be monitoring thousands of publications, which highlights not only the volume of evidence but also the task repetition that your team could be facing. What’s your experience, Christa?

A: We know that we don’t have a year to submit a CER—we have three months. So we know we have about two months to complete a literature review from beginning to end, including developing the protocol, instituting good data management practices, analyzing the data, and putting it into a report. How do you accomplish that? The key is to have efficient processes and then overlay those processes into a software platform. If you don’t have sound processes and you don’t have a software platform that can automate your literature review stages, it won’t happen. Your three-month deadline is shot.

More importantly, it’s not just about looking at the literature within a single article. It’s looking across articles for the same piece of evidence. How do you do that? You need multiple people working collaboratively and well trained on the software platform.

Basically, it’s about how to ensure quality, timeliness, and regulatory compliance while making good budget decisions and continuously assessing your ecosystem for improvements.

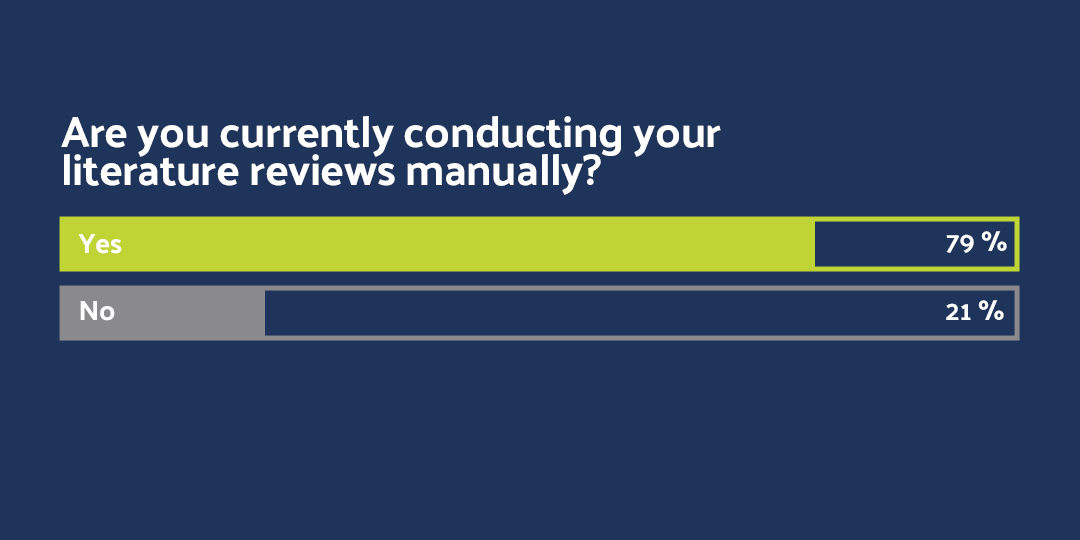

Q: So let’s take a look at our poll results and see whether our audience is currently employing manual processes or leveraging automation to complete their CER/PER submissions. It seems 79% of the attendees are still doing their literature reviews manually. Sara, what’s your takeaway here? How can automation help you?

A: I tell the following story very often to illustrate why automation is a better way to approach literature reviews. I had a 712-page Word document with titles and abstracts, and I had to include comments with exclusion reasons for each one of the references. It was a very tedious process and would cause my computer to crash every time I tried to open the document. It was a nightmare.

So first of all, if you’re working with this volume of literature, you end up with something that’s extremely error prone. It’s very difficult to manage, whether you’re using Excel or Word, and there’s no audit trail. I know that because I had to go back to those 712 pages and manually count up my exclusion reasons three or four times to make sure the numbers matched. The risk of error is enormous, and that’s where automation comes in and offers a more efficient process.

Some people will say they have a standard way of doing things. But really, all it takes is one extra space in a spreadsheet and suddenly your whole process is thrown off and you have no idea when or where it happened.

If you look at the case study we released with DistillerSR, you’ll see that DistillerSR’s automated workflows helped us reduce title and abstract screening time by 74% and full-text screening time by 70%. A lot of that was just about having the title and abstract in one screen and having the ability to click on buttons as opposed to thinking about these individual exclusion reasons and typing all of them out. It’s been a huge time saver and it’s lifted an enormous mental burden off the team. Simply put, manual processes are unsustainable for the volume of evidence we’re dealing with.

Q: I’m pretty sure your C-level is very happy about the time savings you just highlighted. Increased efficiency translates into having your medical writer focused on research instead of managing Excel spreadsheets. This is an actual example of how technology can facilitate your workflow. Christa, you’ve been doing this for many years at Johnson & Johnson, a large organization. What’s your experience? Is employing automation and smart workflows the way forward for small and big companies alike, as well as for distributed teams all over the globe?

A: That’s a very interesting question, Bassil, and it’s something I’ve had in my head for a very long time, because I’ve had to teach a lot of individuals on my team why automation is important and why software tools are important, and these people had no previous experience using technology to do their work. I had to go through this same process myself. I used to manage clinical studies. I knew the importance of good data management practices, and I knew that we should not use paper case report forms. That was back in 2000.

Step one in the journey towards embracing automation is teaching people in your organization about the reason behind the change. If you don’t have a strong leader in your organization who gets it, it’ll be really challenging. The second step is to spend time learning the platform, which is especially important if you’re not a software person. In our case, we reached out to Evidence Partners and implemented DistillerSR. EP’s customer support team built a path for us, and we committed time to learning the software, configuring our templates, and making it work for us.

If you have DistillerSR, regardless of what type of literature you’ll monitor, how complex your process will be, and how big your team is, you can customize it to meet your needs. For example, you can set it up to simply scan for similar-device and state-of-the-art data for your target device. Or you can configure the platform to do title and abstract screening. The bottom line is that you need to align yourself with a strong leader who’s not afraid of change, and you need to commit time to learning the platform. There’s definitely a learning curve, but you’ll see the benefit and it will pay off in the long run.

Q: Thank you, Christa. That is a very strong message: commitment, willingness to change, and leadership buy-in. Sara, tell us a bit about the picture at Philips. What kind of operational efficiencies do you have in place as an organization to streamline data gathering and analysis?

A: We’ve been utilizing DistillerSR pretty heavily in our literature reviews, and it has been single-handedly the biggest driver of operational efficiency that we’ve introduced in the clinical evaluations team to date. The key is knowing that DistillerSR can be configured to match your existing processes. We conducted a pilot study to analyze our core data gathering process and identify specific areas for improvement. Ultimately, implementing a smart workflow system to set the cadence for our literature reviews and standardize the search parameter strategy was key to driving efficiency.

To reinforce what Christa said, it’s critical to have that strong champion who will drive the adoption of the platform within the organization. My colleague, Michael Klopfer, likes to say that we’ve never deployed DistillerSR to a group and had them come back and tell us that they prefer doing reviews manually. There’s a learning curve, and we’ve implemented an in-house training program where anyone within Philips can reach out and get trained on the new system. Having an internal DistillerSR support system seems to make people more likely to engage.

Going back to the workflows, even the smaller features that you’re able to set up automatically make a big difference. We’re doing literature searches on a monthly basis with the same search terms. You can set up that search to run automatically in the DistillerSR interface so you don’t have to rerun the same search from scratch. We just set up a monthly alert and have the references pulled right into the system. It might sound like a small task, but those little things are actually saving a ton of time. When you factor in the number of searches you’re maintaining per device, that time adds up.

Q: So change management, training, and education are very important messages that we need to consider. Christa, what’s your advice for the audience? How can they transition from manual to automated processes?

A: For the 79% of the audience not currently automating their literature review processes, my advice is to seek a software platform that’s right for you. Get your basics down and lay out a plan. Develop a screening form that aligns with your screening criteria. Don’t try to implement technology without establishing your protocol first. Look at areas where you can standardize your questions and draft a workflow. Run a pilot with a simple structure, reevaluate, and start layering in other, more complex elements. The biggest mistake you can make when implementing new software in an organization is trying to do too much at once. Take a stepwise approach.

We’ve been using DistillerSR for a very long time, and we’re just now starting to layer more complex elements into the process. We wanted it to be stable first and we wanted everyone to be properly trained on it.

Q: Thank you, Christa. An important thing to highlight is that you need to make sure automation works for you, which translates into leveraging automation to achieve transparent and audit-ready records that clearly outline the path to your conclusion. Can you please give the audience an idea of how much time you’re saving using automation?

A: We did our pilot project at Johnson & Johnson many years ago when we transitioned from manual to automated processes, and we saw title and abstract screening time decrease by 50 to 70%, depending on the skill set of the reviewer, and data extraction time decrease by 30%.

Q: Sara, can you summarize your key takeaway for the audience?

A: Don’t be afraid of transitioning from manual to automated processes. There will be a learning curve, but don’t get discouraged. Cultivate a champion within the organization who believes the end result will be worthwhile, because in the end, the benefit of using an automated platform will far outweigh any resistance you might face throughout the process. Make sure you choose a solution that can scale as your requirements change or expand.

Q: And you, Christa, what’s your key takeaway?

A: Coming off a recent audit, I can tell you that EU MDR audits will focus on the literature related to post-market surveillance. The evidence will be scrutinized because it’s one of the main data sources used to justify the safety and performance of your medical device. Furthermore, the auditors want to see the objective evidence that supports your exclusion and appraisal criteria, to verify that your data is sound and reliable and to ensure your device is performing as intended. If you’re part of an organization fighting hard to get your EU MDR certification, use that argument when requesting funding for automation. Your executives need to understand that it will be very difficult to get through the EU MDR certification process using manual, error-prone processes.

“Executives need to understand that it will be very difficult to get through the EU MDR certification process using manual, error-prone processes.”